Agentic Mode

Delegate complex, multi-step tasks to specialized AI agents that plan, reason, and act autonomously

Kumari AI is built around a core belief: AI should do more than answer questions. It should think, plan, and act, handling complex tasks the way a skilled human collaborator would, from start to finish.

Agentic Mode is where that vision lives. Instead of sending a prompt to a language model and waiting for a single response, you hand a task to an agent: a purpose-built system that reasons through the problem, decides what steps to take, uses tools to execute them, and delivers a complete, structured result.

How Agents Are Different From LLMs

A standard LLM takes your input and produces output in a single pass. It can't check its own work against live data, run searches mid-task, or course-correct when something doesn't pan out.

Agents operate differently:

| | Standard LLM | Kumari Agent |
|---|---|---|
| Works in | A single pass | Multiple steps |
| Searches the web | Only if told to | Autonomously, as needed |
| Adapts mid-task | No | Yes |
| Uses tools | No | Yes (search, code, APIs) |
| Best for | Questions, drafts, summaries | Research, workflows, complex tasks |

The Planning-Execution Cycle

When you invoke an agent, it goes through a deliberate cycle rather than generating immediately:

1. Understand: reads your task and identifies what's actually being asked, including implied requirements.

2. Plan: breaks the task into an ordered sequence of steps.

3. Execute: carries out each step using whatever tools it needs (web search, code execution, data retrieval) and evaluates results before moving on.

4. Adapt: if a step produces unexpected results, the agent adjusts its plan rather than blindly continuing.

5. Deliver: consolidates everything into a clear, structured final response.

Agents take longer than a standard LLM response, and that's expected: they're doing real, multi-step work.
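The cycle above can be pictured as a small loop. The Python sketch below is illustrative only and does not show how Kumari agents are implemented: the `understand`, `plan`, and `run_agent` functions, the `Step` type, and the stub tools are all hypothetical names made up for this example. It shows the general shape of plan, execute, evaluate, adapt, and deliver.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical tool type: takes a query string, returns a result string.
Tool = Callable[[str], str]


@dataclass
class Step:
    tool: str   # name of the tool to call
    query: str  # input passed to that tool


def understand(task: str) -> str:
    # Stand-in for task analysis: here we only normalise whitespace.
    return " ".join(task.split())


def plan(goal: str) -> List[Step]:
    # Stand-in planner: always search first, then summarise.
    return [Step("search", goal), Step("summarise", goal)]


def run_agent(task: str, tools: Dict[str, Tool], max_retries: int = 1) -> str:
    goal = understand(task)                      # 1. Understand
    results: List[str] = []
    for step in plan(goal):                      # 2. Plan
        result = tools[step.tool](step.query)    # 3. Execute
        retries = 0
        # 4. Adapt: an empty result counts as "unexpected", so the step
        # is revised (a broader query) instead of blindly continuing.
        while not result and retries < max_retries:
            step = Step(step.tool, step.query + " overview")
            result = tools[step.tool](step.query)
            retries += 1
        if result:
            results.append(result)
    return "\n".join(results)                    # 5. Deliver


# Stub tools for the example; a real agent would call search or code tools.
def flaky_search(query: str) -> str:
    return f"notes on {query}" if "overview" in query else ""


def summarise(query: str) -> str:
    return f"summary of {query}"


output = run_agent("compare  EV makers",
                   {"search": flaky_search, "summarise": summarise})
print(output)
```

Here the first search returns nothing, so the loop retries with an adjusted query before moving on to the next step. This extra evaluation and retry work is also why an agent run takes longer than a single-pass response.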

How to Use Agentic Mode

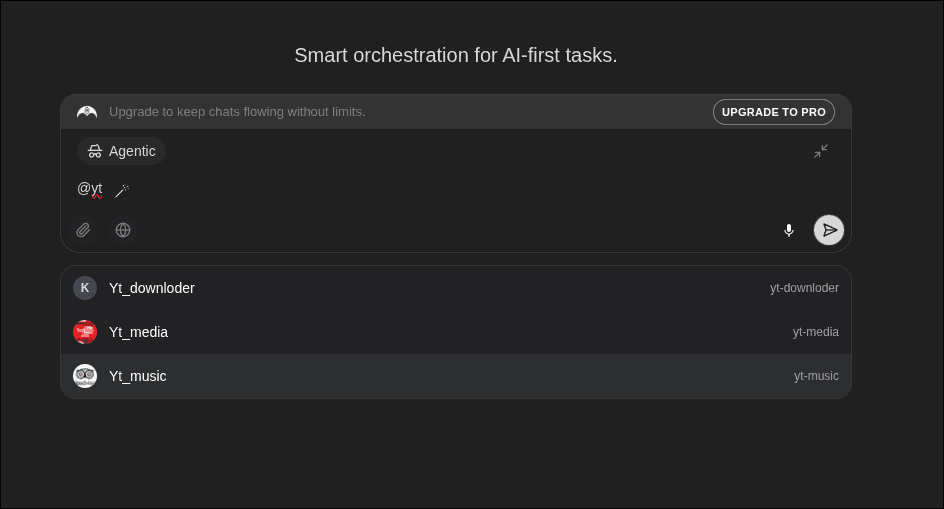

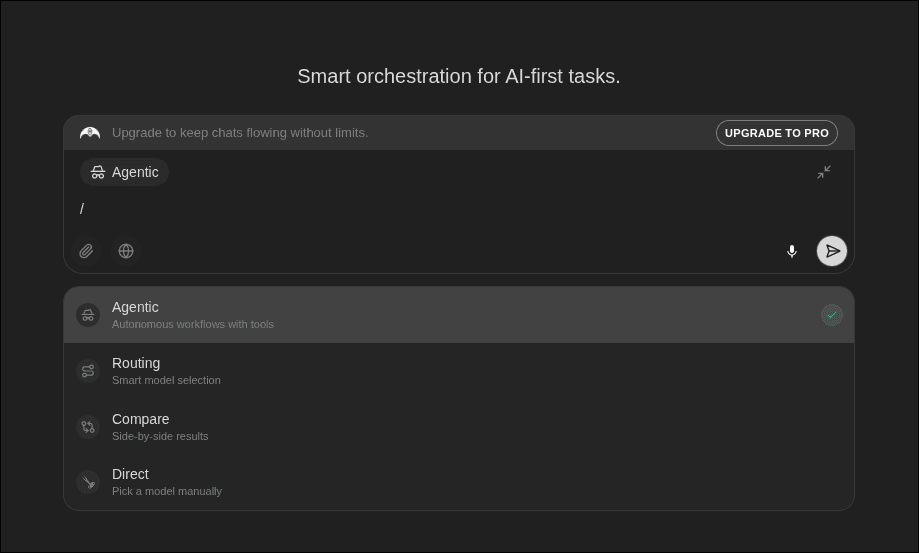

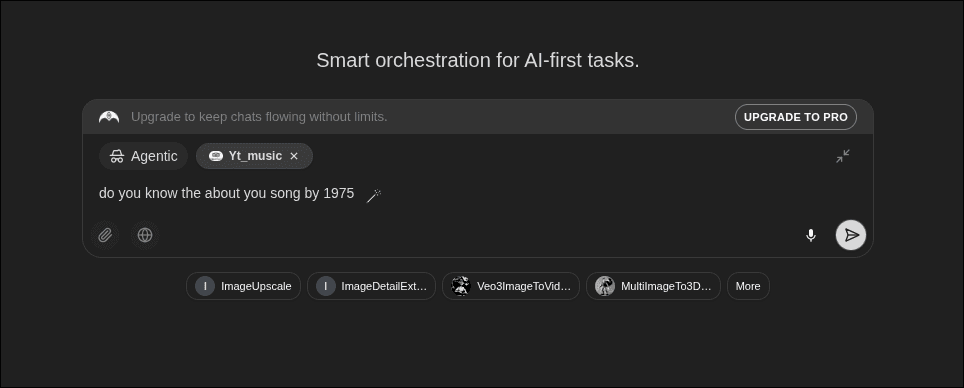

Switch to Agentic Mode

Type / in the chat input and select Agentic.

Describe your task

Write your request with as much context as you can. Agents plan based on what you give them, so specificity leads to better results.

Review and continue

Once the agent finishes, you can ask follow-up questions, request refinements, or hand the result to a different agent for the next stage.

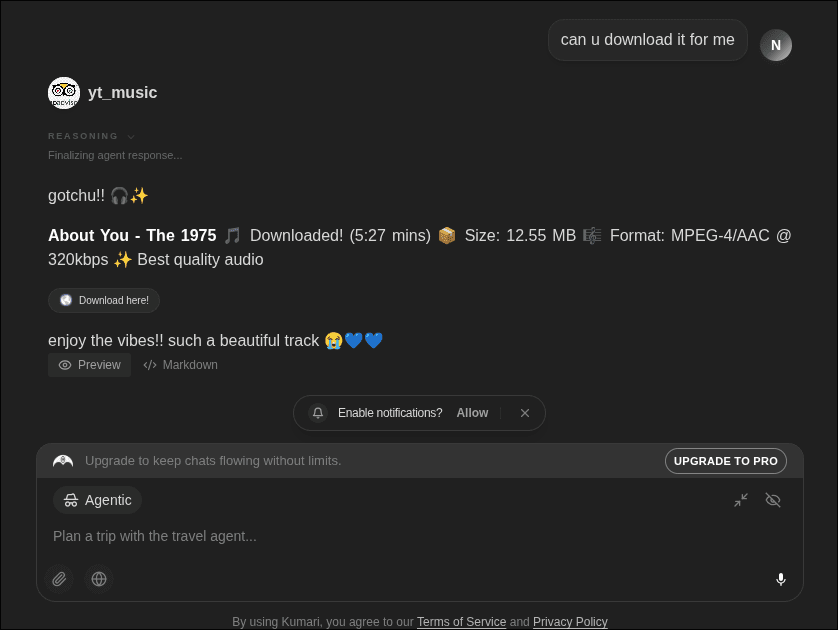

Available Agents

| Agent | Best for |
|---|---|
| Researcher | Deep multi-source research, fact-checking, synthesis |
| Finance | Market data, financial analysis, investment research |
| Coder | Code generation, debugging, explanation, refactoring |
| Writer | Long-form content, editing, tone refinement, creative writing |
| Social | Social content drafting, trend analysis, platform-specific copy |

Writing Good Agent Prompts

- Be specific about output format: "summarise as a 5-bullet brief" beats "summarise this"

- State your constraints: word limits, source types, structure requirements

- Give context: the more the agent knows about your goal, the more targeted its plan

- Scope the task: for very large jobs, break them into stages across turns
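If you draft agent prompts often, the checklist above can be turned into a simple template. The sketch below is one possible way to do that. `build_agent_prompt` is a hypothetical helper and not part of Kumari AI, and the field labels are only a suggestion. You would paste the resulting text into the chat input.

```python
from typing import List


def build_agent_prompt(task: str, context: str, output_format: str,
                       constraints: List[str]) -> str:
    """Assemble a prompt that states the task, context, format, and constraints."""
    lines = [
        f"Task: {task}",
        f"Context: {context}",
        f"Output format: {output_format}",
    ]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {item}" for item in constraints)
    return "\n".join(lines)


prompt = build_agent_prompt(
    task="Summarise recent developments in solid-state batteries",
    context="Briefing for a non-technical product team",
    output_format="5-bullet brief",
    constraints=["Under 200 words", "Cite sources for each bullet"],
)
print(prompt)
```

For a very large job, build one such prompt per stage and send the stages across separate turns.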

Credit Usage

Agentic tasks cost more credits than standard responses because the agent runs multiple steps, each consuming compute. Exact cost depends on the number of steps and whether web search is used. The total is shown after the task completes.

If you run agentic tasks regularly, a Custom Pack is the most efficient way to keep your balance topped up.